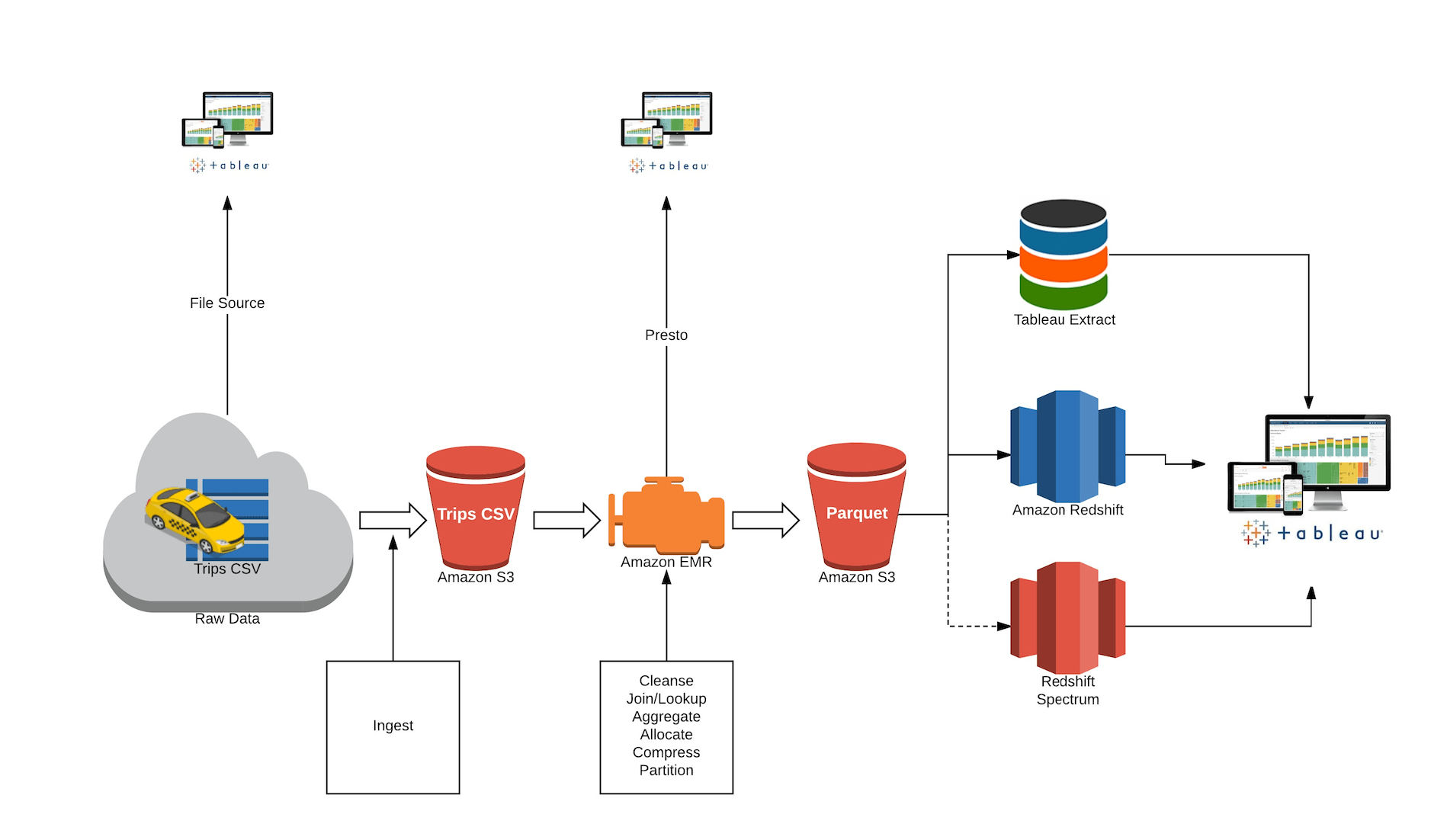

When we first looked into the possibility of building a cloud-based data warehouse many years ago, we were struck by the fact that our customers were storing ever-increasing amounts of data, and yet only a small fraction of that data ever made it into a data warehouse or Hadoop system for analysis. We saw that this wasn’t just a cloud-specific anomaly. It was also true in the broader industry, where the growth rate of the enterprise storage market segment greatly surpassed that of the data warehousing market segment.

Our customers knew that there was untapped value in the data they collected; why else would they spend money to store it? But the systems available to them to analyze this data were simply too slow, complex, and expensive to use on all but a select subset of it. They were storing it in the optimistic hope that, someday, someone would find a solution.

Amazon Redshift became one of the fastest-growing AWS services because it helped solve the dark data problem. It was at least an order of magnitude less expensive and faster than most alternatives available. And Amazon Redshift was fully managed from the start: you didn’t have to worry about capacity, provisioning, patching, monitoring, backups, and a host of other DBA headaches. Many customers, including Vevo, Yelp, Redfin, and Edmunds, migrated to Amazon Redshift to improve query performance, reduce DBA overhead, and lower the cost of analytics.

And our customers’ data continues to grow at a very fast rate. Across the board, gigabytes to petabytes, the average Amazon Redshift customer doubles the data analyzed every year. That’s why we implement features that help customers handle their growing data, for example to double the query throughput or improve the compression ratios from 3x to 4x. That gives our customers some time before they have to consider throwing away data or removing it from their analytic environments. However, there is an increasing number of AWS customers who each generate a petabyte of data every day; that’s an exabyte in only three years. There wasn’t a solution for customers like that. If your data is doubling every year, it’s not long before you have to find new, disruptive approaches that transform the cost, performance, and simplicity curves for managing data.

Let’s look at the options available today. You can use Hadoop-based technologies like Apache Hive with Amazon EMR. This is actually a pretty great solution because it makes it easy and cost-effective to operate directly on data in Amazon S3 without ingestion or transformation. You can spin up clusters whenever you need them and size them for the specific job you’re running. These systems are great at high scale-out processing like scans, filters, and aggregates. On the other hand, they’re not that good at complex query processing. For example, join processing requires data to be shuffled across nodes, and for a large amount of data and large numbers of nodes that gets very slow. And joins are intrinsic to any meaningful analytics problem.

You can also use a columnar MPP data warehouse like Amazon Redshift. These systems make it simple to run complex analytic queries with orders of magnitude faster performance for joins and aggregations performed over large datasets. Amazon Redshift, in particular, leverages high-performance local disks and sophisticated query execution. Because it is just standard SQL, you can keep using your existing ETL and BI tools. But you do have to load data, and you have to provision clusters against the storage and CPU requirements you need.

Both solutions have powerful attributes, but they force you to choose which attributes you want. We see this as a “tyranny of OR.” You can have the throughput of local disks OR the scale of Amazon S3. You can have sophisticated query optimization OR high-scale data processing. You can have fast join performance with optimized formats OR a range of data processing engines that work against common data formats. At this scale, you really can’t afford to choose. You need “all of the above.”

Redshift Spectrum

We built Redshift Spectrum to end this “tyranny of OR.” With Redshift Spectrum, Amazon Redshift customers can easily query their data in Amazon S3. Like Amazon EMR, you get the benefits of open data formats and inexpensive storage, and you can scale out to thousands of nodes to pull data, filter, project, aggregate, group, and sort. Like Amazon Athena, Redshift Spectrum is serverless and there’s nothing to provision or manage. You just pay for the resources you consume for the duration of your Redshift Spectrum query. Like Amazon Redshift itself, you get the benefits of a sophisticated query optimizer, fast access to data on local disks, and standard SQL.
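
As a rough check on the growth figures quoted in this post, the arithmetic can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the decimal unit convention (1 EB = 1000 PB), the 365-day year, and the helper names `years_to_accumulate` and `headroom_years` are assumptions made here, not anything taken from Amazon Redshift.

```python
import math

# Assumption: decimal units, so 1 exabyte = 1000 petabytes.
PB_PER_EB = 1000
DAYS_PER_YEAR = 365


def years_to_accumulate(target_pb: float, daily_pb: float) -> float:
    """Years needed to accumulate target_pb when generating daily_pb per day."""
    return target_pb / daily_pb / DAYS_PER_YEAR


def headroom_years(improvement_factor: float, annual_growth: float = 2.0) -> float:
    """Years of data growth absorbed by a capacity improvement.

    If data grows by annual_growth each year, an improvement of factor f
    is used up after log(f) / log(annual_growth) years.
    """
    return math.log(improvement_factor) / math.log(annual_growth)


# One petabyte per day reaches an exabyte in a little under three years.
print(f"PB/day to 1 EB: {years_to_accumulate(PB_PER_EB, 1.0):.2f} years")

# With data doubling yearly, doubling query throughput buys about one year,
# while going from 3x to 4x compression buys roughly five months.
print(f"2x throughput:    {headroom_years(2.0):.2f} years")
print(f"3x -> 4x storage: {headroom_years(4 / 3):.2f} years")
```

Under these assumptions, incremental improvements only postpone the problem by months or a year when data doubles annually, which is the point the post makes about needing a different approach.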
